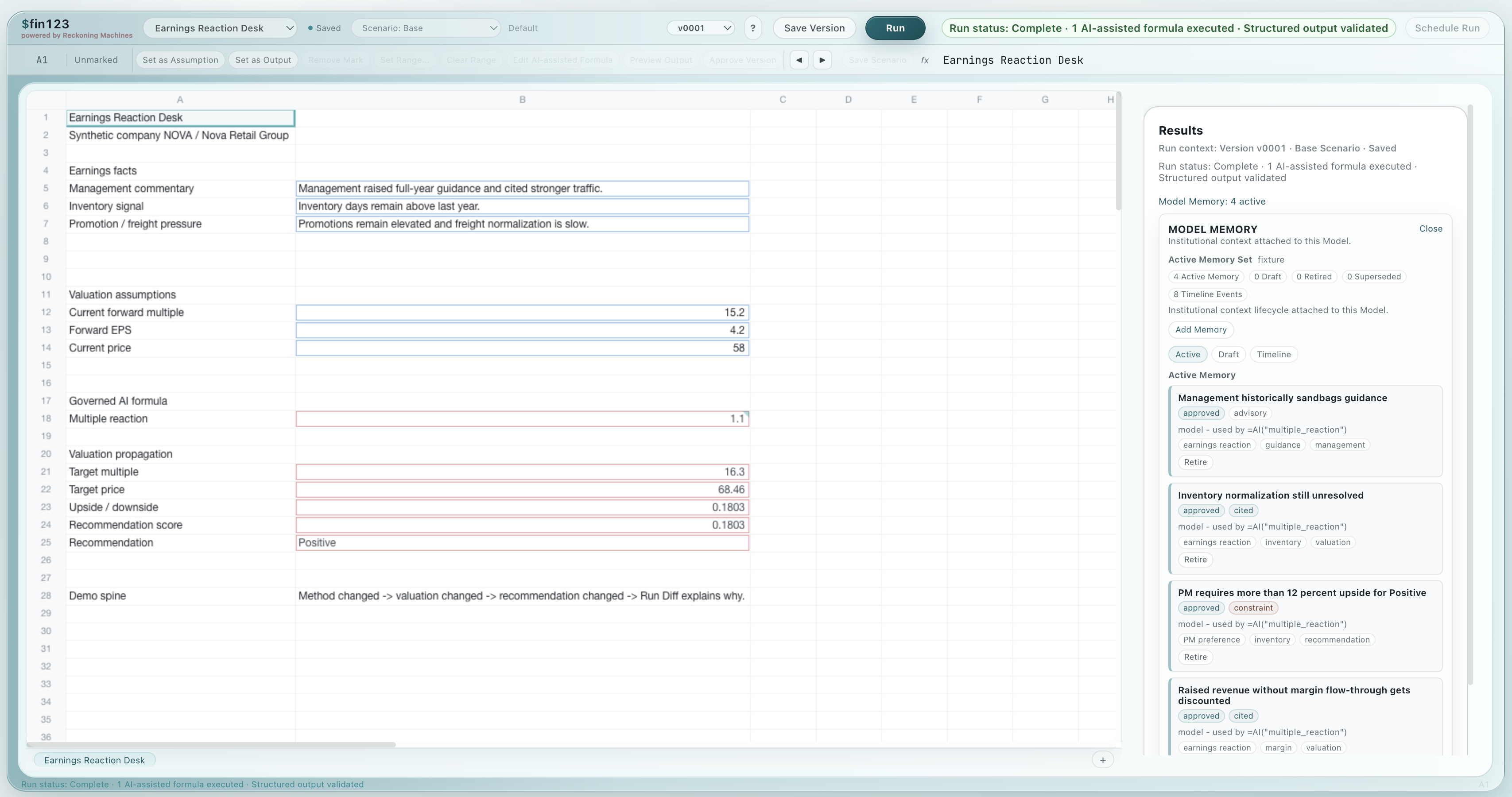

AI that participates in the model.

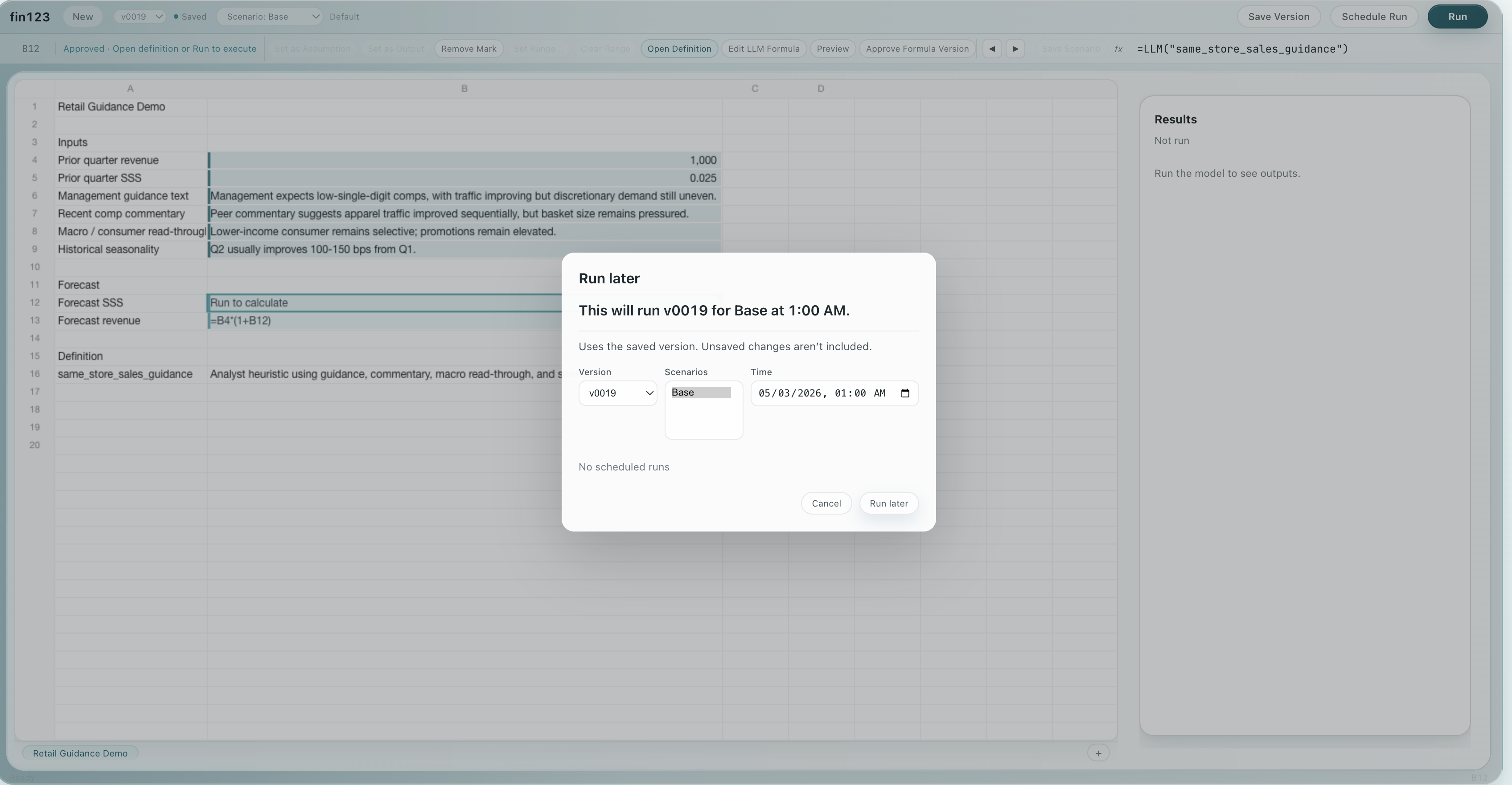

Build in the grid. Branch the work. Run the model. Explain what changed. Replay the decision.

AI built in. Audit built in. No black box.

One approved model can be versioned (“Desk” vs. “House”) and run across many companies, many scenarios, or both, with versioned lineage, approved data, approved AI, replay, and Audit behind every result. AI is native, diffing is native, versioning is native.

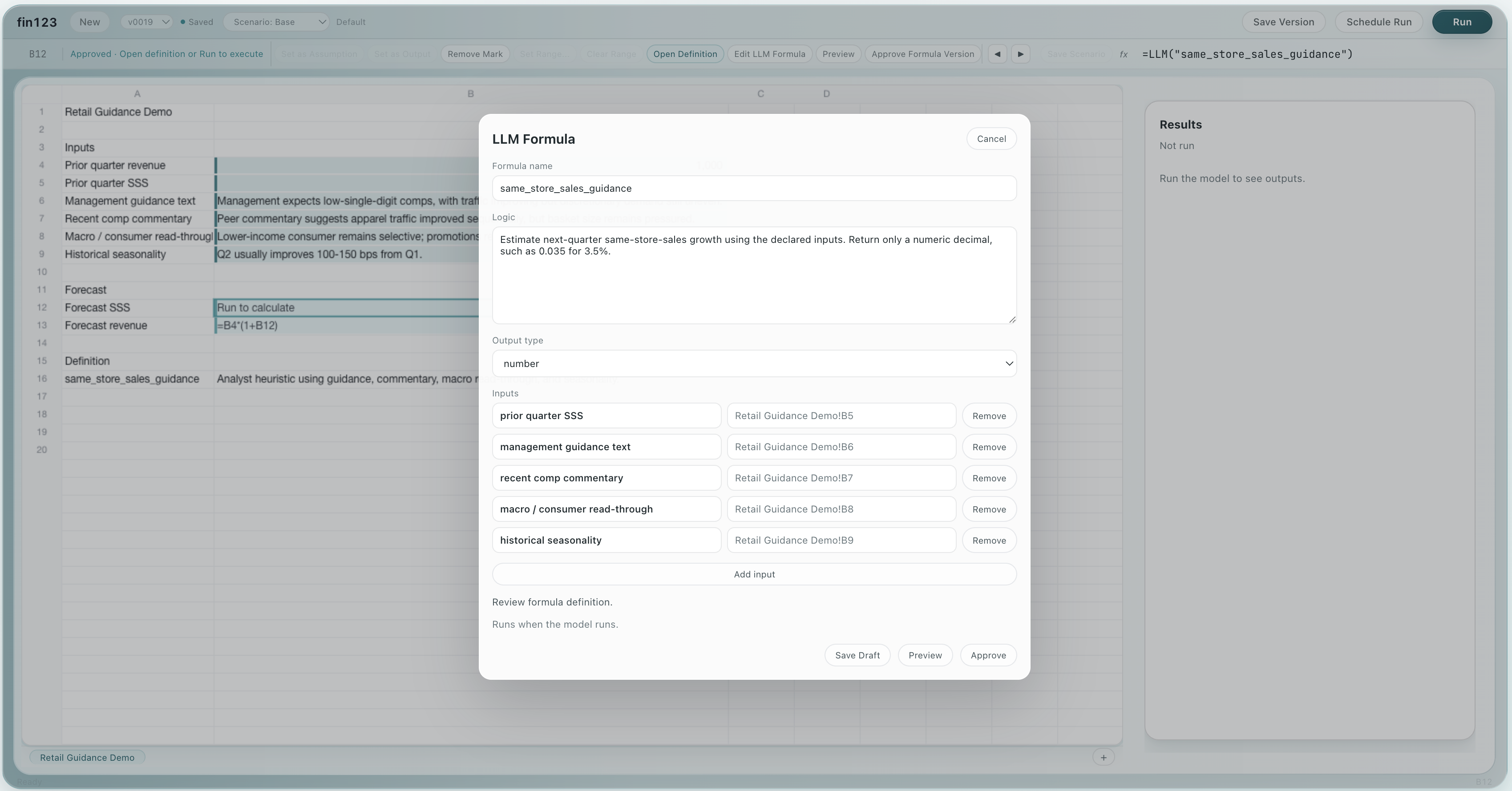

Build in the grid. Mark Assumptions and Outputs directly in the spreadsheet your team already knows.

Keep work organized by lineage. Branch analysis, promote reviewed work, and return working models to a known-good state without filename sprawl.

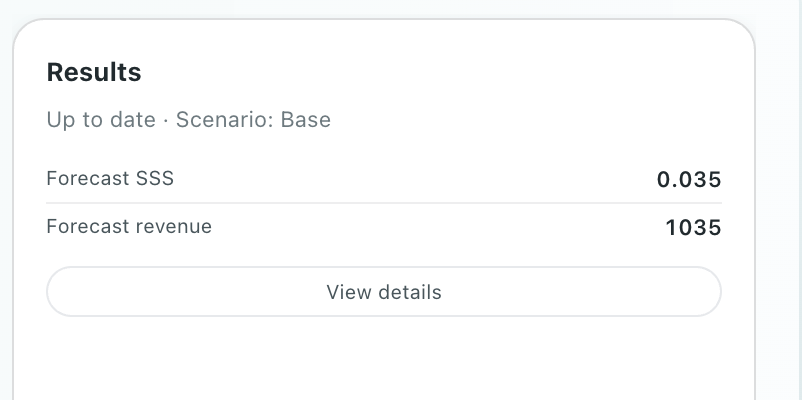

Run one model across the coverage universe. Each company keeps its own assumptions, data, Runs, Results, Audit, and replay context.

Diff runs, not just spreadsheets. Compare Scenarios, Sensitivity Cases, and Results side by side.

Connect approved data directly in the grid.

=DATA("bank.net_interest_income") maps business concepts to approved internal and external data through one formula interface.
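One way to picture the concept-to-source mapping behind a formula like =DATA("bank.net_interest_income") is a small registry lookup. The sketch below is illustrative only and is not the product's implementation: the `Source` type, the `REGISTRY` contents, the provider and field names, and the `resolve` function are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    provider: str   # hypothetical provider name
    field: str      # hypothetical field identifier at that provider
    approved: bool  # whether this source has been approved for use


# Hypothetical registry mapping business concepts to data sources.
REGISTRY = {
    "bank.net_interest_income": Source("internal_warehouse", "nii_total", True),
    "bank.draft_metric": Source("vendor_feed", "draft_x", False),
}


def resolve(concept: str) -> Source:
    """Return the source for a concept, refusing unknown or unapproved ones."""
    source = REGISTRY.get(concept)
    if source is None:
        raise KeyError(f"unknown concept: {concept}")
    if not source.approved:
        raise PermissionError(f"source for {concept} is not approved")
    return source
```

In this sketch, the formula author only names the business concept; which provider and field back it, and whether that source is approved, is decided in one place.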

AI workflows run inside the model.

The =AI() formula can execute reviewed Methods: chained analysis, approved context, validation, typed output, Model Memory, YAP chronology, and Audit. Inside the model.

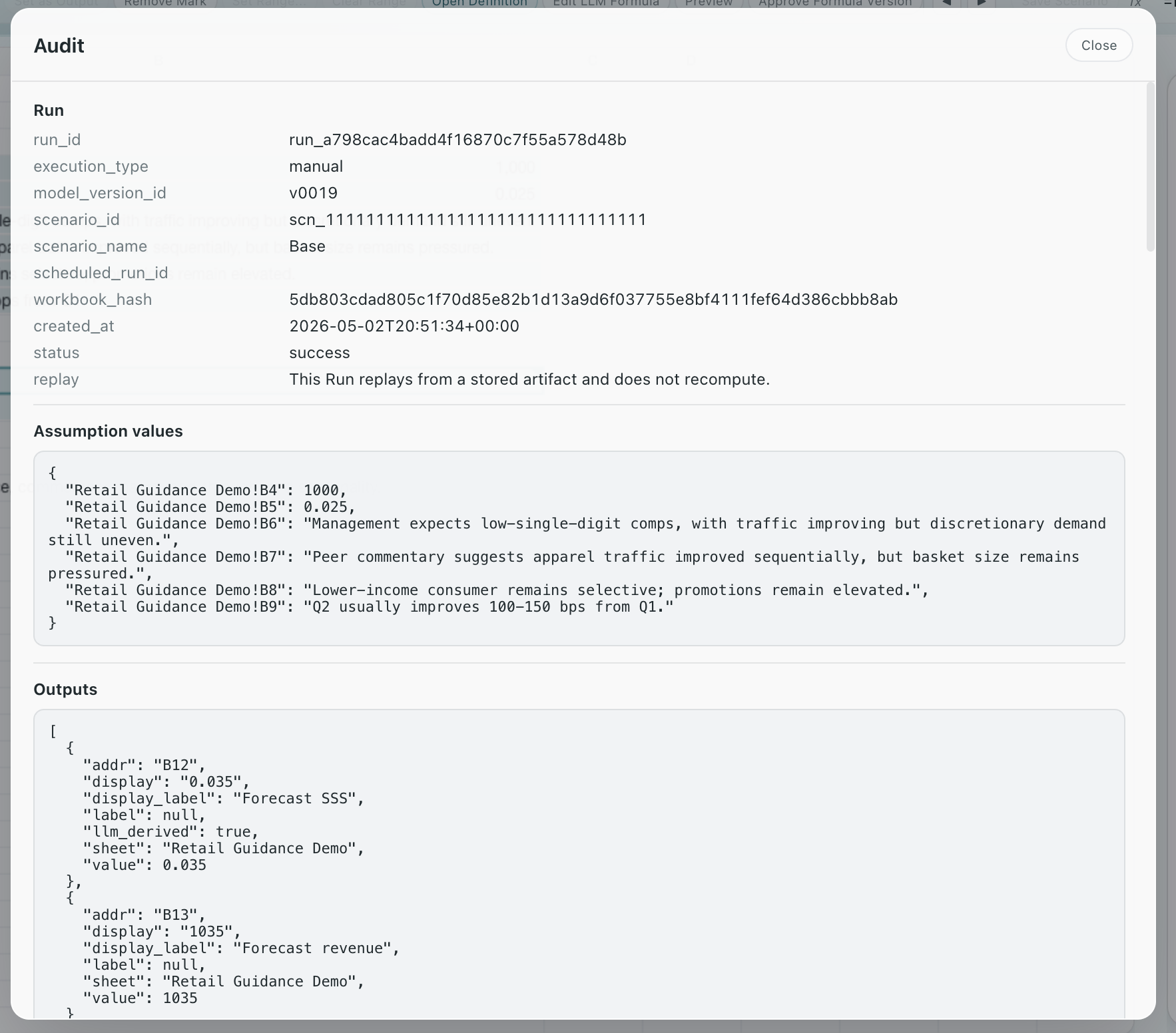

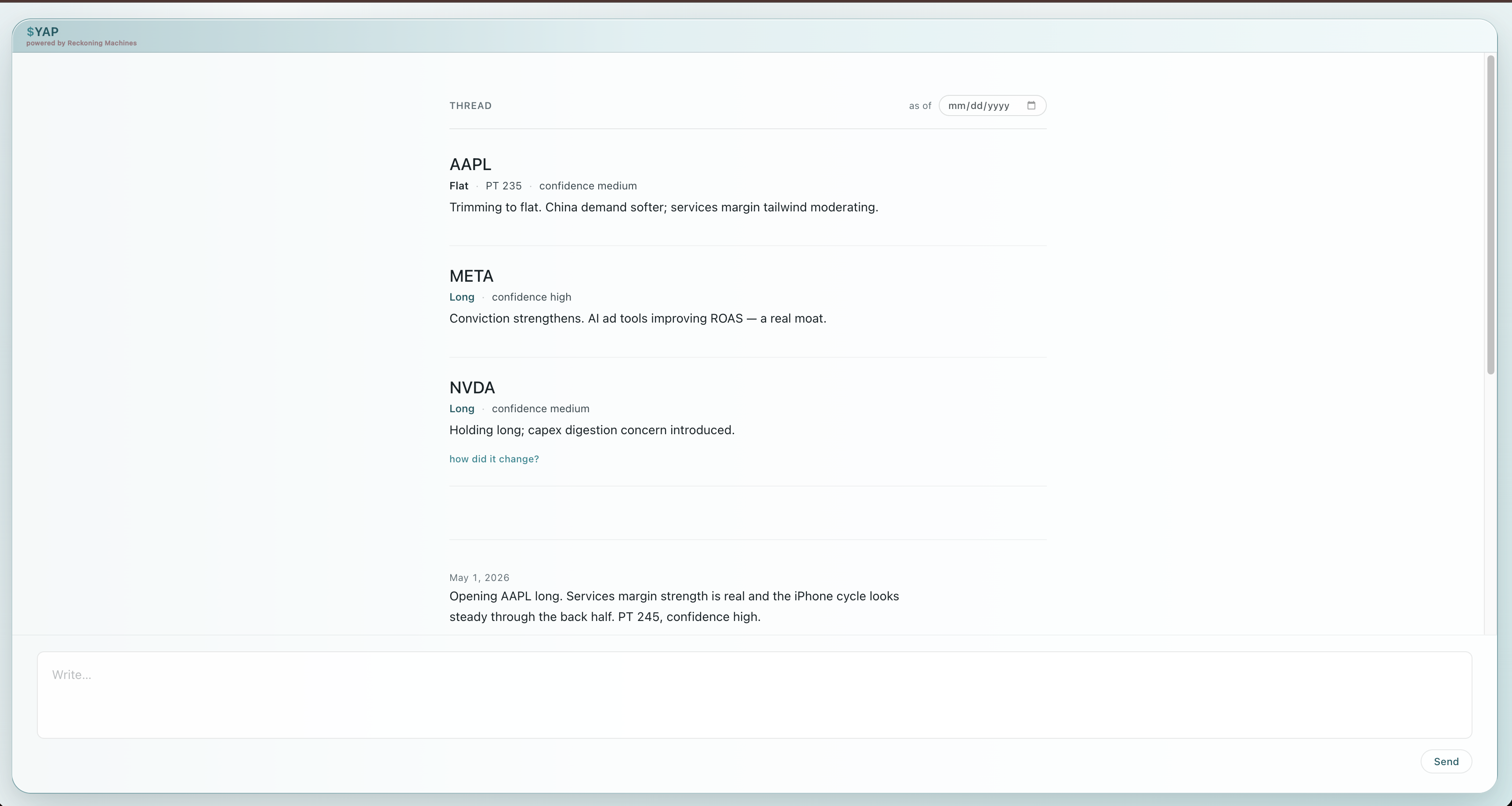

Replay historical decisions. Reconstruct models with the model state, data, provider evidence, AI method, memory, chronology, and time rules that applied then.

Audit every result. See what ran, what changed, which evidence was used, who approved it, and why the result was valid at that point in time.

Sheet Warnings. Every Run checks for errors before they become problems. See which formulas may have drifted, where hardcodes have replaced formulas, and which other changes need review. Spreadsheet linting for finance.
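As a rough illustration of what a lint check of this kind might look like, the sketch below compares two snapshots of a sheet and flags cells where a formula was replaced by a hardcoded value or where the formula text changed. It is an assumption-laden example, not the product's actual checks: the snapshot format (cell address mapped to raw cell text), the `find_warnings` name, and the warning labels are all hypothetical.

```python
def find_warnings(before: dict[str, str], after: dict[str, str]) -> list[tuple[str, str]]:
    """Compare two snapshots (cell address -> raw text) and list cells to review."""
    warnings = []
    for cell in sorted(before):
        old = before[cell]
        new = after.get(cell)
        # Skip cells that were removed or left unchanged.
        if new is None or new == old:
            continue
        was_formula = old.startswith("=")
        is_formula = new.startswith("=")
        if was_formula and not is_formula:
            warnings.append((cell, "hardcode replaced formula"))
        elif was_formula and is_formula:
            warnings.append((cell, "formula changed"))
    return warnings


# Example snapshots (made-up cells for illustration).
before = {"B2": "=B1*1.05", "B3": "=SUM(B1:B2)", "B4": "100"}
after = {"B2": "105", "B3": "=SUM(B1:B3)", "B4": "120"}
result = find_warnings(before, after)
```

Here `B2` is flagged because a formula became a constant, `B3` because its formula text changed, and `B4` is ignored because it was an input value in both snapshots.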